Three Generations of Forum Software: How the meh.com forums (with lazy loaded images) are the fastest forums we've ever built

Background

If you count the classic woot.com forums, the deals.woot.com forums, and the forums that power meh.com, we're essentially on Forums 3.0.

When we started Mediocre Laboratories we tried really hard to not build our own forum software. We've been in contact with Jeff Atwood of codinghorror.com throughout the years and took a look at early versions of his up and coming Discourse project. It's certainly matured since that time, but among other challenges we had some issues getting our mediocre.com authentication system seamlessly integrated with an early alpha version of Discourse that Jeff and team were nice enough to get up and running in our Microsoft Azure environment.

There's a quote from Alan Kay that's always stuck with me. "People who are really serious about software should make their own hardware." One of the things we identified when starting Mediocre Laboratories was that we wanted to have community as part of the core experience of the ecommerce experiments we were going to build. It felt like if we were going to be serious about community we needed to make our own forum.

Long story short, we built our own forums for meh.com. You're here reading this thing on them right now. They're not perfect, and we're constantly tweaking them, but they're the fastest forums we've ever built. I'd like to share with you how we recently improved the performance even further by adding a fancy implementation of lazy loaded images.

Benchmarks

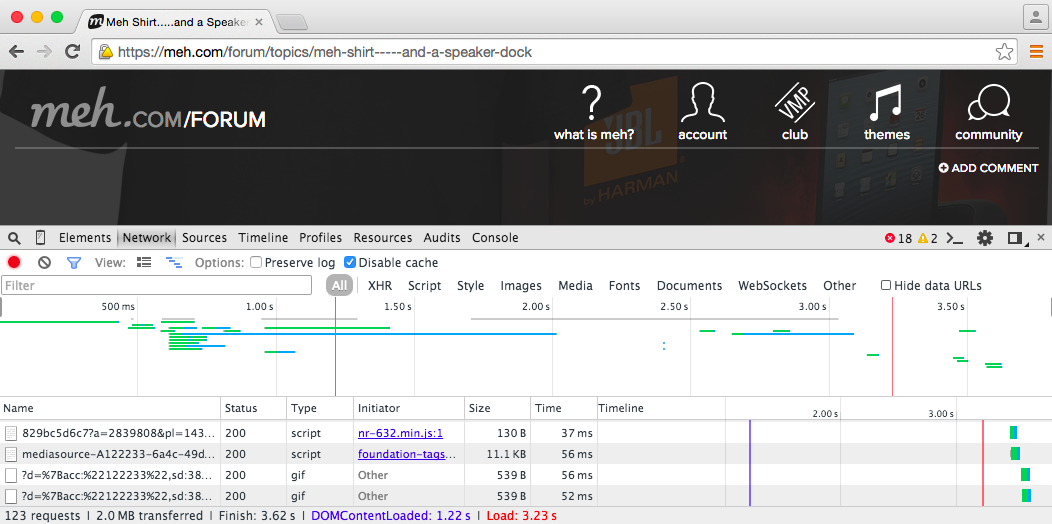

I'm going to compare all three generations of forum software using the standard developer tools that come out of the box with Google Chrome.

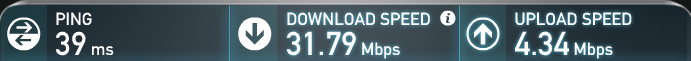

I'm simply requesting the same URL a total of 10 times with the cache disabled and writing down the DOMContentLoaded, Load, and Finish timings (measured in seconds). I tried to find some URLs that were at least somewhat similar in the amount of content displayed. I'm on my MacBook Air testing over my home WiFi. It's late afternoon and my kids are streaming a movie on Netflix.

This is hardly the most scientific way to run a benchmark, but it's real-world data. If you repeat this test at home I'm certain your numbers are going to vary widely.

woot.com forums

The original woot.com forums started as phpBB but quickly switched to open source forum software built on .NET which broke everyone's smilies. Over time, much like how Darth Vader is now more machine than man, we modified nearly every feature of the open source code to make it perform better until there was very little of the open source code left.

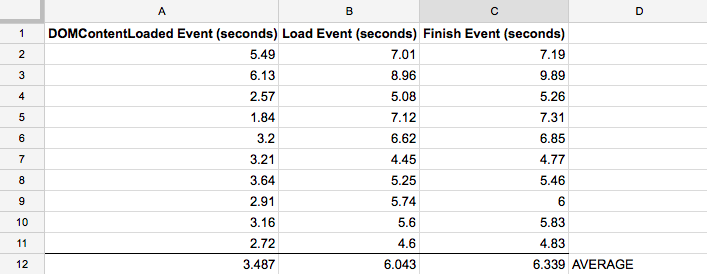

I took this URL (http://www.woot.com/forums/viewpost.aspx?postid=6300935&pageindex=1&replycount=136) and ran it through the benchmark.

Average of 6.339 seconds. This is the slowest benchmark we'll see here.

deals.woot.com forums

Deals.Woot was the first site we built "in the cloud" and had an all new set of .NET code we wrote from scratch.

In building it, we learned a lot about AWS (even before the Amazon acquisition).

I took this URL (http://deals.woot.com/questions/details/b5ee6efd-ca63-4b06-8610-fc76eae3bef5/what-do-you-think-about-the-new-answers-comments-changes) and ran it through the benchmark.

Average of 5.114 seconds. Getting faster.

meh.com forums

We went in a completely different direction with the meh.com forums. Changed from .NET to Node.js. Moved from AWS to Microsoft Azure.

The meh.com forums follow a microservices architecture where the meh.com app, written with Express, talks to an internal forum service that exposes REST endpoints over HTTP using Restify. MongoDB stores the topic, comment, and reply data after we process them with a flavor of Markdown we call mehdown. We use Redis for caching. InfluxDB stores clickstream data. There's a small amount of React on the front-end.

I hope I dropped enough web-scale buzzwords there for a proper Hacker News post.

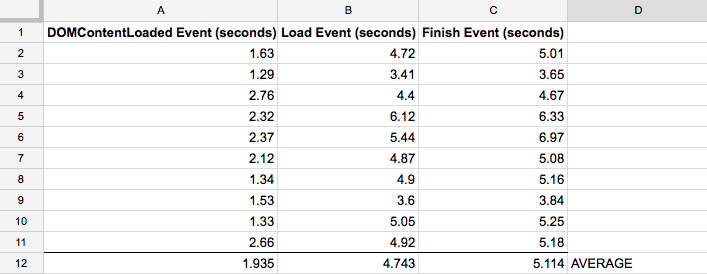

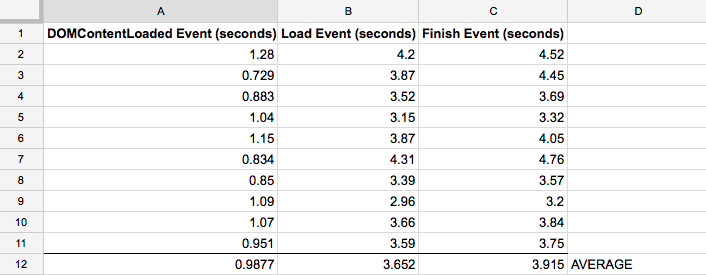

I took this URL (https://meh.com/forum/topics/meh-shirt-----and-a-speaker-dock) and ran it through the benchmark.

Average of 3.915 seconds. Neat.

One more thing...

The meh.com forum benchmark above was taken without a feature that we recently implemented that improves load times by not eagerly loading all the images in a topic when you load the page. Lazy loading isn't a new or innovative technique but we thought it would help, especially with long forum topics on mobile devices.
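For anyone who hasn't seen lazy loading before, the core decision is small enough to sketch. This is not our production code, just a minimal illustration: the function names, the `top`/`height`/`loaded` fields, and the 200 pixel margin are all made up for the example, and it assumes each image's position on the page is already known.

```javascript
// Decide whether an image is close enough to the viewport to start loading.
// All measurements are in pixels from the top of the document.
// The default margin of 200 is an arbitrary value chosen for this sketch.
function shouldLoad(imageTop, imageHeight, scrollTop, viewportHeight, margin = 200) {
  const viewTop = scrollTop - margin;
  const viewBottom = scrollTop + viewportHeight + margin;
  const imageBottom = imageTop + imageHeight;
  // The image qualifies if any part of it overlaps the padded viewport.
  return imageBottom >= viewTop && imageTop <= viewBottom;
}

// Return the not-yet-loaded images that should be requested right now.
function imagesToLoad(images, scrollTop, viewportHeight, margin = 200) {
  return images.filter(
    (img) => !img.loaded && shouldLoad(img.top, img.height, scrollTop, viewportHeight, margin)
  );
}
```

In a browser you would call something like `imagesToLoad` from a scroll or resize handler and set the real `src` on whatever comes back. Everything further down the page costs nothing until the reader actually scrolls to it.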

We spent a lot of time designing and implementing a responsive layout for our forums that renders well on mobile devices (something obviously lacking in our previous two forum system attempts). With good reason, considering 40+% of our traffic comes from smartphones.

We needed a way to reduce the download size of pages for bandwidth-constrained devices. The lazy (heh) way to implement this is to type "jquery lazy load plugin" into Google, slap a plugin into your code, then pat yourself on the back because you just made your site a little bit faster.

We started there and tested that exact approach on the internal version of our forum we use for company communication. Didn't work very well because our forum users are posting all kinds of images with a wide range of sizes. Some are short and fat. Others are tall and skinny.

The problem is, if you haven't already loaded the images you don't know how much space on the page to reserve while the image is loading. When you scroll down through the viewport the other content jumps around the page as the images are downloaded.

Here's an animated GIF that illustrates the issue (from: http://davidecalignano.it/lazy-loading-with-responsive-images-and-unknown-height/)

We solved this problem by adding a step to our Markdown parser when a topic, comment, or reply is posted. Before we add the content to the database we check for any image URLs and fetch their sizes using an awesome Node.js module named http-image-size. What I like most of all about http-image-size is that it only downloads the minimum amount of the image necessary to determine the image's size and then aborts the request. It provides the minimal amount of overhead possible to get the data we need.
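To show why only a sliver of the file is needed, here is a rough sketch that reads the dimensions out of a PNG header. It is not the http-image-size source and makes no claim about how that module works internally. `readPngSize` is a name invented for this example, and it only handles PNG (JPEG and GIF store their dimensions differently).

```javascript
// Every PNG starts with this fixed 8-byte signature.
const PNG_SIGNATURE = [0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a];

// Read width and height from the first 24 bytes of a PNG file.
// Layout: 8-byte signature, 4-byte chunk length, 4-byte "IHDR" tag,
// then width and height as 4-byte big-endian integers.
// Returns null if there is not enough data yet or the bytes are not a PNG.
function readPngSize(bytes) {
  if (bytes.length < 24) return null;
  for (let i = 0; i < PNG_SIGNATURE.length; i++) {
    if (bytes[i] !== PNG_SIGNATURE[i]) return null;
  }
  const view = new DataView(bytes.buffer, bytes.byteOffset, bytes.byteLength);
  // Bytes 12..15 must spell "IHDR" (0x49 0x48 0x44 0x52).
  if (view.getUint32(12) !== 0x49484452) return null;
  // DataView reads big-endian by default, which matches the PNG format.
  return { width: view.getUint32(16), height: view.getUint32(20) };
}

// Hand-built header for a 640x480 image, for illustration only.
const header = new Uint8Array([
  0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a, // signature
  0x00, 0x00, 0x00, 0x0d,                         // IHDR chunk length (13)
  0x49, 0x48, 0x44, 0x52,                         // "IHDR"
  0x00, 0x00, 0x02, 0x80,                         // width  = 640
  0x00, 0x00, 0x01, 0xe0,                         // height = 480
]);
const size = readPngSize(header);
```

For a PNG, 24 bytes are enough. Once those have arrived the rest of the download can be thrown away, which is the idea behind aborting the request early.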

Once we have the image sizes for all the topics, comments, and replies we can then reserve the correct amount of space on the page while the images are loading. This helps eliminate the other content jumping around the page.
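The arithmetic behind reserving space is simple once the width and height are stored. Here's a minimal sketch, assuming the image is scaled down to fit its container and never scaled up. The function names are hypothetical and this isn't lifted from our code.

```javascript
// Height in pixels to reserve for an image shown in a container of the
// given width. The image shrinks to fit but is never enlarged.
function reservedHeight(imageWidth, imageHeight, containerWidth) {
  const renderedWidth = Math.min(imageWidth, containerWidth);
  return Math.round(renderedWidth * (imageHeight / imageWidth));
}

// The same ratio as a percentage, for the CSS "padding-bottom" technique:
// percentage padding resolves against the containing block's width, so a
// box with height 0 and this padding keeps the image's aspect ratio.
function paddingBottomPercent(imageWidth, imageHeight) {
  return (imageHeight / imageWidth) * 100;
}
```

A 1200x800 image in a 600 pixel wide column needs 400 pixels of height. A tall, skinny 300x900 image in the same column stays at its natural 900 pixels. With the percentage version, one common arrangement is an outer wrapper capped at the image's natural width with the padded box inside it, so the placeholder resizes along with a responsive layout without any JavaScript.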

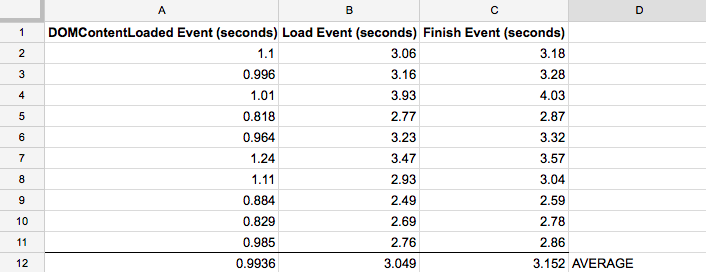

With fancy pants lazy loading in place, I took the same URL as before (https://meh.com/forum/topics/meh-shirt-----and-a-speaker-dock) and ran it through the benchmark.

Average dropped by about three quarters of a second to 3.152 seconds. Nice.
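If you'd like the four averages side by side, this little snippet does the subtraction and the percentages. The numbers are the averages reported above. The `improvement` helper is just something written for this comparison.

```javascript
// Average timings in seconds, as reported in this post.
const averages = {
  'woot.com': 6.339,
  'deals.woot.com': 5.114,
  'meh.com': 3.915,
  'meh.com (lazy loading)': 3.152,
};

// Seconds saved and percentage reduction going from one average to another.
function improvement(before, after) {
  const saved = before - after;
  return {
    seconds: Number(saved.toFixed(3)),
    percent: Number(((saved / before) * 100).toFixed(1)),
  };
}

const lazyGain = improvement(averages['meh.com'], averages['meh.com (lazy loading)']);
const overallGain = improvement(averages['woot.com'], averages['meh.com (lazy loading)']);
```

Lazy loading saves 0.763 seconds, about 19.5% of the previous meh.com average. Compared with the original woot.com forums, the total is 3.187 seconds, or roughly half the load time.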

- 10 comments, 8 replies

Interesting post, @shawn!

For the mobile data estimates, do you have actual data from the forum portion of the site or did you use the main page estimates, as seen in the image?

I suspect most scramble for the midnight dash on a smartphone but it's not always clear how they would use the forum portion. For example, I browsed at midnight on a tablet, but now I'm sitting at a computer.

Also, it's nice to see other followers of Jeff Atwood and Coding Horror, I think every software engineer can learn a thing or two from reading both his blog and JoelOnSoftware.

If you want, I'll show you my fizzbuzz for a TI-83+

@TaRDy You're right, the mobile percentage drops once you go from the homepage to the forums. 29.06% of our forum traffic is on a mobile device.

@TaRDy +1 for fizzbuzz shoutout

@TaRDy

That reminds me: I often find myself starting a forum post on a phone or tablet at midnight (Meh time, 11pm local) before moving to a desktop computer. Usually because I had to look something up or got frustrated trying to type forum markup. Auto-saving drafts would be delicious.

I don't follow either of their blogs closely, but as a software person I've read my share of articles by Jeff Atwood and Joel Spolsky. My exposure to their writing may not be representative, but it feels like Joel's posts are generally better-researched, while it's more hit and miss with Jeff (usually in areas outside his core experience).

Whoa. I'm not going to pretend that I understood all that, but congrats on the fastest forums yet!

Neat!

(Shame that time stamping doesn't work for videos)

Love the transparency in all of this. Thanks for posting.

@shawn, while I think I understood the basics of what you just said, from a user perspective, the meh forum clean look, feel, and speed just plain works great!

That is a tribute to you and your team. And your responsiveness toward tweaking "issues"/improvements blows away the norm.

I wonder whether any site responsiveness issues we might feel have more to do with tricky balancing of cloud server capacity and high user volume during peaks.

('Haven't spent much time in the Woot turf for at least 9+ months, but it seemed surprising that with all that Amazon cloud server horsepower available, their pages felt infuriatingly sluggish far too often.

I walk away from sites that are consistently slow to load. Popular Mechanics used to be the poster-child for me for slowwwww sites. Not sure if they are still that way. Why don't more sites realize they lose eyeballs simply due to page load sluggishness?)

@RedOak Sweet of you to say such nice things. I rarely find the Woot pages sluggish these days. There's still a wicked smart tech team over there and I know they pay attention to performance. They've probably got all the performance they're gonna get without a substantial re-write of their aged forum code.

Very cool and an interesting review of forum history. I'm glad I can say I've used each piece of it too! :)

Nicely done sir, this gives us something to link to when people ask about the gray boxes in the forums. Thanks!

so speed is good and all but where is the pagination?

@communist I vote no.

@communist careful what you wish for. we could make that change and make everyone click next, next, next in order to see the latest comments.

Great stuff in here, @shawn. So, how do you like coding with node.js/express/mongodb as compared to traditional .NET MVC/IIS/SQL Server?

@jsh139 Pretty sure we could have gotten the same performance with either platform. Picking tools is complicated, but for one thing I simply needed a change from a career's worth of Visual Studio work which had gotten really heavy. I enjoy working with lighter-weight tools these days like Sublime Text, Atom. I love the NPM ecosystem. Seems like there's always several modules to pick from. Have you written any Node.js apps as a comparison?

@shawn that's cool. I haven't written anything more than a simple "hello world" node/express/mongo app. I've been immersed in the Visual Studio/SQL world as well (going on 16 years now) but I'm always open to newer constructs and frameworks if they are more performant or there is a real business need.

meh. sell me more stuff.